📘 Introduction & Overview

🔍 What is Sensor Data Streaming?

Sensor Data Streaming refers to the real-time or near-real-time transmission of data generated by physical sensors (temperature, motion, humidity, etc.) to a centralized system (e.g., cloud, edge node, or application backend) for processing, monitoring, and decision-making.

In DevSecOps, this streaming can be used to:

- Monitor infrastructure metrics (e.g., CPU heat sensors, network congestion sensors).

- Enhance security observability (e.g., biometric sensor logs, intrusion detection sensors).

- Automate incident detection and response workflows.

🧭 History or Background

- Early use (2000s): In industrial IoT systems and smart factories using SCADA.

- Evolution (2010s): Adoption in smart homes, health tech, and connected cars.

- Modern era (2020s): Now integrated with cloud-native pipelines, edge computing, and DevSecOps tools for proactive monitoring, analytics, and alerts.

🔐 Why is it Relevant in DevSecOps?

- Security Monitoring: Real-time sensor streams detect anomalies (e.g., unauthorized access).

- Ops Reliability: Environmental sensor data helps predict and prevent outages.

- Automation: Triggers pipelines when specific environmental or operational thresholds are met.

- Audit & Compliance: Maintains logs from physical environments for compliance (e.g., HIPAA, ISO 27001).

🧠 Core Concepts & Terminology

📖 Key Terms and Definitions

| Term | Definition |
|---|---|
| Sensor | A physical device that detects and responds to input from the physical environment. |
| Stream Processing | Handling data in motion, as it is generated, rather than after it’s stored. |
| Telemetry | Automatic measurement and transmission of data from remote sources. |
| Edge Computing | Processing data closer to the source (e.g., sensor gateways). |
| Data Broker | Middleware, such as Apache Kafka or an MQTT broker, that transfers sensor data between producers and consumers. |
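To make the "data in motion" idea from the Stream Processing definition concrete, here is a minimal sketch of a rolling average that updates as each reading arrives instead of waiting for a stored batch. The function name, window size, and sample values are illustrative assumptions, not part of any specific streaming framework.

```python
from collections import deque
from typing import Iterable, Iterator


def rolling_mean(readings: Iterable[float], window: int = 3) -> Iterator[float]:
    """Yield the mean of the last `window` readings as each new one arrives."""
    if window < 1:
        raise ValueError("window must be at least 1")
    recent = deque(maxlen=window)  # the oldest reading drops out automatically
    for value in readings:
        recent.append(value)
        yield sum(recent) / len(recent)


if __name__ == "__main__":
    # Hypothetical temperature samples in degrees Celsius.
    for mean in rolling_mean([21.0, 21.5, 29.0, 22.0], window=2):
        print(f"{mean:.2f}")
```

Because the function is a generator, it can wrap any iterable source, including one that never ends, which is the usual situation with live sensors.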

🔄 How It Fits into the DevSecOps Lifecycle

| DevSecOps Phase | Sensor Streaming Role |
|---|---|
| Plan | Define which sensors are critical for security and operations. |
| Develop | Build integrations that consume and act on sensor data. |
| Build | Validate those integrations against sensor stream simulators. |
| Test | Run chaos/security tests triggered by live sensor input. |
| Release | Deploy pipelines that use streaming events as deployment triggers. |
| Monitor | Visualize sensor data in dashboards like Grafana. |
| Respond | Trigger alerts/incidents from anomalies detected in the streams. |
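The Build phase mentions sensor stream simulators. One possible shape for such a simulator is sketched below: a seeded generator that produces repeatable readings and can inject a single spike for testing alert logic. All names and default values here are hypothetical.

```python
import random
from typing import Iterator, Optional


def simulate_temperature(
    count: int,
    base: float = 22.0,
    jitter: float = 0.5,
    spike_at: Optional[int] = None,
    spike_value: float = 45.0,
    seed: int = 0,
) -> Iterator[float]:
    """Yield `count` fake readings; the reading at index `spike_at` is forced to `spike_value`."""
    rng = random.Random(seed)  # private, seeded generator keeps runs repeatable
    for index in range(count):
        if index == spike_at:
            yield spike_value
        else:
            yield round(base + rng.uniform(-jitter, jitter), 2)
```

A fixed seed means a failing pipeline test can be replayed with exactly the same input, which is hard to guarantee with real hardware.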

🏗️ Architecture & How It Works

⚙️ Components

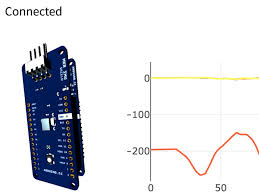

- Sensors – Devices producing continuous data.

- Edge/Gateway – Lightweight processors (e.g., Raspberry Pi, Arduino) aggregating raw data.

- Message Broker – Systems like Apache Kafka, an MQTT broker, or AWS IoT Core to transport data.

- Stream Processor – Real-time analytics using Apache Flink, AWS Kinesis, or Spark Streaming.

- DevSecOps Platform – CI/CD tools like Jenkins, GitHub Actions, or ArgoCD reacting to stream events.

- Dashboards & Alerting – Tools like Grafana, Prometheus, ELK Stack.

🧩 Architecture Diagram (Described)

[Sensor Devices] → [Edge Gateway] → [Message Broker (e.g., Kafka)] →
[Stream Processor (Flink/Kinesis)] →
[DevSecOps System (Alertmanager / GitHub Actions)] →
[Dashboard / Alerts / Automated Deployments]
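The flow above can be simulated in a single process to see how the stages hand data to one another. This is only a sketch: a standard-library queue stands in for the message broker, and the function names and threshold are assumptions made for illustration.

```python
import queue
from typing import Iterable, List, Optional


def gateway(readings: Iterable[Optional[float]], broker: "queue.Queue[float]") -> None:
    """Edge gateway: drop missing readings and forward the rest to the broker."""
    for value in readings:
        if value is not None:
            broker.put(value)


def stream_processor(broker: "queue.Queue[float]", threshold: float) -> List[str]:
    """Stream processor: drain the broker and emit one alert per reading above threshold."""
    alerts = []
    while not broker.empty():
        value = broker.get()
        if value > threshold:
            alerts.append(f"ALERT temperature={value}")
    return alerts


if __name__ == "__main__":
    broker = queue.Queue()  # stand-in for Kafka or an MQTT broker
    gateway([21.0, None, 35.5, 22.0], broker)
    print(stream_processor(broker, threshold=30.0))
```

In a real deployment each stage runs as its own service, and the broker provides the buffering and delivery guarantees that the in-memory queue only imitates.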

☁️ Integration Points with DevSecOps Tools

| Tool | Integration |
|---|---|
| Jenkins | Use Kafka plugins to trigger builds from sensor events. |
| GitHub Actions | Trigger workflows through repository_dispatch events sent by an MQTT-to-webhook bridge. |
| ArgoCD | Auto-deploy when temperature or health sensors meet thresholds. |
| Prometheus + Grafana | Scrape and visualize time-series sensor data. |
| ELK Stack | Store and search historical sensor event logs for security audit. |

🛠️ Installation & Getting Started

🧰 Prerequisites

- An edge node or Raspberry Pi with a sensor connected

- Kafka / MQTT broker setup

- Python or Node.js

- Docker (for containerized streaming stack)

- Basic DevSecOps pipeline (GitHub Actions, Jenkins, etc.)

👣 Step-by-Step Beginner-Friendly Setup Guide

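1. Install a Temperature Sensor (e.g., DHT11) on Raspberry Pi

With the sensor wired to a GPIO pin, install the Python libraries that the publisher script in the next step imports:

sudo apt-get update
sudo apt-get install -y python3-pip
pip3 install Adafruit_DHT paho-mqtt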

2. Publish Sensor Data to MQTT Broker

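import time

import Adafruit_DHT
import paho.mqtt.client as mqtt

sensor = Adafruit_DHT.DHT11
pin = 4  # GPIO pin wired to the sensor's data line

# paho-mqtt 1.x constructor; on 2.x pass mqtt.CallbackAPIVersion.VERSION2 as the first argument.
client = mqtt.Client()
# Public test broker, unencrypted on port 1883; use a TLS-enabled broker of your own in production.
client.connect("broker.hivemq.com", 1883, 60)
client.loop_start()  # background network loop keeps the connection alive

while True:
    # read_retry returns (None, None) when every read attempt fails.
    humidity, temperature = Adafruit_DHT.read_retry(sensor, pin)
    # Compare against None so that a valid reading of 0 is still published.
    if humidity is not None and temperature is not None:
        client.publish("devsecops/sensors/temperature", f"{temperature}")
    time.sleep(5)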
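The publisher sends the temperature as a bare string, which leaves consumers guessing which device produced it and when. A common alternative is a small JSON payload. The sketch below shows one possible encoding and its matching decoder; the field names and the example sensor ID are assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SensorReading:
    sensor_id: str        # hypothetical identifier, e.g. "rack-12-dht11"
    temperature_c: float
    humidity_pct: float
    timestamp: float      # seconds since the Unix epoch

    def to_payload(self) -> str:
        """Serialize to a compact JSON string suitable for an MQTT payload."""
        return json.dumps(asdict(self), separators=(",", ":"))

    @classmethod
    def from_payload(cls, payload: bytes) -> "SensorReading":
        """Parse a payload received from the broker; raises on malformed input."""
        data = json.loads(payload)
        return cls(
            sensor_id=str(data["sensor_id"]),
            temperature_c=float(data["temperature_c"]),
            humidity_pct=float(data["humidity_pct"]),
            timestamp=float(data["timestamp"]),
        )
```

On the publishing side, the string returned by to_payload() would replace the bare temperature in the publish call; a subscriber would pass the received message bytes to from_payload().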

3. Visualize in Grafana via MQTT → Telegraf → InfluxDB

- Use Telegraf's MQTT consumer input plugin (mqtt_consumer) to subscribe to the sensor topic and write the readings to InfluxDB

- Connect Grafana to InfluxDB and build dashboards
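Telegraf's MQTT consumer can parse several payload formats, one of which is InfluxDB line protocol (measurement, optional tags, fields, timestamp). As a rough illustration of what a reading looks like in that format, the helper below builds a single line. It is a simplified sketch that handles float fields only and assumes the measurement name contains no spaces or commas; the measurement and tag names are made up.

```python
def _escape(text: str) -> str:
    """Escape commas, equals signs, and spaces in tag keys, tag values, and field keys."""
    return text.replace(",", "\\,").replace("=", "\\=").replace(" ", "\\ ")


def to_line_protocol(measurement: str, tags: dict, fields: dict, timestamp_ns: int) -> str:
    """Format one reading as a line protocol string with float fields."""
    if not fields:
        raise ValueError("line protocol requires at least one field")
    tag_part = "".join(
        f",{_escape(str(key))}={_escape(str(value))}" for key, value in sorted(tags.items())
    )
    field_part = ",".join(f"{_escape(str(key))}={float(value)}" for key, value in fields.items())
    return f"{measurement}{tag_part} {field_part} {timestamp_ns}"


if __name__ == "__main__":
    print(to_line_protocol(
        "environment",
        {"sensor": "dht11", "site": "rack 12"},
        {"temperature": 23.5},
        1700000000000000000,
    ))
```

Tags such as the sensor name are indexed by InfluxDB, so they are the natural place for anything a Grafana dashboard will filter or group by.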

4. Trigger GitHub Actions from MQTT Messages

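GitHub Actions cannot subscribe to an MQTT topic directly. Use an MQTT-to-webhook bridge such as MQTT2Webhook to send a repository_dispatch event with the event type sensor_alert to the GitHub API, then listen for that event in the workflow:

on:
  repository_dispatch:
    types: [sensor_alert]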
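For readers who want to see what the bridge has to send, the sketch below builds the HTTP request for GitHub's repository dispatch endpoint using only the standard library. The function name is hypothetical, and the owner, repository, and token are placeholders to replace with your own values. The request is constructed but not sent.

```python
import json
import urllib.request


def build_dispatch_request(owner: str, repo: str, token: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a repository_dispatch request for a sensor alert."""
    body = json.dumps({"event_type": "sensor_alert", "client_payload": payload}).encode("utf-8")
    return urllib.request.Request(
        url=f"https://api.github.com/repos/{owner}/{repo}/dispatches",
        data=body,
        method="POST",
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )
```

Passing the result to urllib.request.urlopen() sends it; the token must be allowed to dispatch events on the target repository. Inside the workflow, the values in client_payload are available through the event context, so the sensor reading can influence what the job does.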

🧪 Real-World Use Cases

🔧 1. Data Center Cooling Control

- Sensors detect rack temperature spikes

- Stream to Kafka

- Trigger cooling system API or alert via Slack

🔐 2. Physical Intrusion Detection

- Motion or door sensors detect unauthorized movement

- Event streamed → GitHub Action → PagerDuty alert

🧬 3. Pharmaceutical Storage Monitoring

- Stream temperature & humidity from storage units

- Automatically roll back or trigger a deployment when unsafe storage conditions are detected

🚗 4. Automotive DevSecOps Testing

- Vehicle sensors report diagnostics

- Triggers simulations, software update pipelines

✅ Benefits & Limitations

🎯 Key Advantages

- Real-time insight into security and performance.

- Enables automated responses to physical-world changes.

- Improves compliance via secure sensor data tracking.

- Supports preventive maintenance and anomaly detection.

⚠️ Common Challenges

| Challenge | Description |
|---|---|
| Latency | Wireless sensors may cause data lag. |
| Security | Sensor endpoints can be exploited (man-in-the-middle). |
| Scalability | Thousands of sensors require distributed systems (Kafka clusters, etc.). |
| Data Validation | Faulty sensor data can trigger false actions. |
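One way to reduce the false actions mentioned under Data Validation is to ignore implausible readings and to require several consecutive readings above the threshold before firing. The class below is a minimal sketch of that idea; its name, the default plausible range of 0 to 50, and the streak length are assumptions to tune per sensor.

```python
from typing import Optional


class ThresholdTrigger:
    """Fire only after `required` consecutive valid readings exceed `threshold`."""

    def __init__(self, threshold: float, required: int = 3,
                 valid_min: float = 0.0, valid_max: float = 50.0) -> None:
        self.threshold = threshold
        self.required = required
        self.valid_min = valid_min  # assumed plausible range; tune per sensor
        self.valid_max = valid_max
        self._streak = 0

    def update(self, reading: Optional[float]) -> bool:
        """Feed one reading; return True when the trigger should fire."""
        if reading is None or not (self.valid_min <= reading <= self.valid_max):
            return False  # implausible data is ignored and the streak is left unchanged
        if reading > self.threshold:
            self._streak += 1
        else:
            self._streak = 0
        return self._streak >= self.required
```

The trade-off is latency: with readings every 5 seconds and a streak of 3, a genuine spike is reported roughly 10 to 15 seconds after it starts.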

📏 Best Practices & Recommendations

- 🔐 Encrypt all sensor data in transit using TLS.

- 🔎 Use edge filtering to discard noise or invalid data.

- 📜 Implement audit logs for sensor-based triggers in pipelines.

- ⚙️ Plan capacity for streaming systems like Kafka or Kinesis, and enable auto-scaling where the platform supports it.

- 📋 Align with NIST 800-53, HIPAA, or GDPR for data compliance.
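As a starting point for the TLS recommendation above, the sketch below builds a client-side TLS context with Python's standard ssl module. The function name and the optional CA file parameter are assumptions; the context verifies the broker's certificate and hostname and refuses protocol versions older than TLS 1.2.

```python
import ssl
from typing import Optional


def make_tls_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Return a client-side TLS context that verifies the broker's certificate."""
    # Uses the system CA store when ca_file is None.
    context = ssl.create_default_context(cafile=ca_file)
    # Refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

With the paho-mqtt client, a context like this can be passed to tls_set_context() before connecting, typically on port 8883 rather than 1883, assuming the broker is configured for TLS.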

🆚 Comparison with Alternatives

| Approach | Pros | Cons |
|---|---|---|
| Sensor Data Streaming | Real-time, scalable, proactive automation | Complex setup, resource intensive |
| Polling APIs | Simple to implement | Delayed response, inefficient |
| Log Aggregation | Good for historical audit | Not suited for instant reaction |

✅ Choose Sensor Streaming if:

- You need instant event-based automation

- You’re working with physical systems (IoT/embedded)

❌ Avoid if:

- You don’t have continuous internet connectivity

- You need only post-event analysis

🔚 Conclusion

Sensor Data Streaming bridges the physical and digital world in DevSecOps, enabling real-time automation, risk detection, and compliance monitoring.

As industries move towards edge-native, secure, and intelligent DevOps, sensor streaming will become a cornerstone of proactive operations and infrastructure resilience.