Introduction

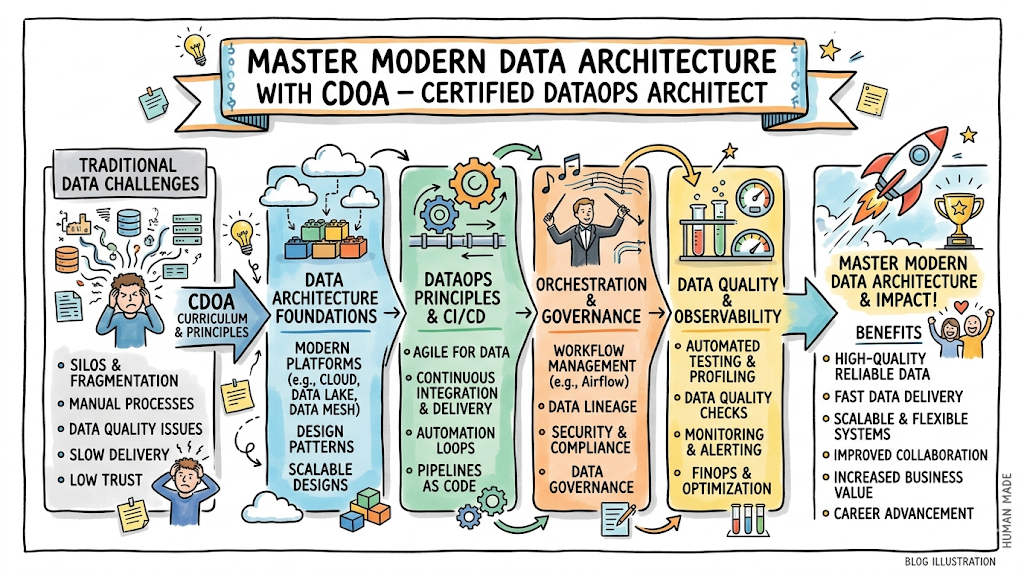

In the current landscape of platform engineering and cloud-native infrastructure, the CDOA – Certified DataOps Architect has emerged as a critical credential for professionals looking to bridge the gap between data engineering and operational excellence. This guide is designed for software engineers, site reliability engineers, and technical leaders who recognize that data is no longer just a backend concern but a core component of the delivery pipeline. By pursuing this path through DataOpsSchool, practitioners gain the architectural framework necessary to treat data pipelines with the same rigor, automation, and monitoring standards applied to modern software code. Understanding this certification helps professionals make better career decisions by aligning their technical skills with the high-demand field of automated data management and governance.

What is the CDOA – Certified DataOps Architect?

The CDOA – Certified DataOps Architect represents a shift from manual data handling to an automated, resilient, and scalable architectural approach. It exists to solve the persistent bottlenecks found in traditional data environments where silos between data scientists and operations teams often lead to project delays and fragile systems.

This certification focuses on production-ready learning, ensuring that architects can design systems that handle data ingestion, processing, and distribution with high availability. It aligns with modern engineering workflows by incorporating version control, continuous integration, and automated testing into the data lifecycle. For the enterprise, the intended result is faster time-to-insight and fewer data-related errors.

Who Should Pursue CDOA – Certified DataOps Architect?

This certification is highly beneficial for a wide range of technical roles, including Data Engineers who want to operationalize their pipelines and SREs looking to expand their expertise into data reliability. Cloud professionals and platform engineers will find the architectural principles invaluable for building more robust service offerings.

Beginners with a strong interest in the intersection of data and DevOps can use this as a foundational roadmap, while experienced engineers can validate their expertise in complex data orchestration. Managers and technical leaders should pursue this to better understand the resource and tooling requirements of a modern DataOps team. This is globally relevant as companies in India and international markets transition toward data-driven decision-making at scale.

Why CDOA – Certified DataOps Architect Is Valuable Today and Beyond

The demand for DataOps professionals is growing rapidly as enterprises realize that traditional data management cannot keep up with the volume and velocity of modern information. This certification provides longevity by focusing on architectural principles rather than just specific, ephemeral tools.

By mastering these concepts, professionals stay relevant even as underlying technologies evolve from one cloud provider to another. The return on time and career investment is significant, as architects capable of building automated data environments often command higher salaries and hold more strategic influence within their organizations. Adoption across finance, healthcare, and retail sectors ensures a steady demand for these specialized skills.

CDOA – Certified DataOps Architect Certification Overview

The program is delivered through a structured curriculum and hosted on the official DataOpsSchool platform to keep quality consistent across all learning modules. The assessment approach is designed to be practical, moving beyond simple multiple-choice questions to evaluate how a candidate handles real-world architectural challenges.

The certification is structured to provide a clear progression of ownership, from understanding basic data flows to managing enterprise-wide data mesh architectures. It emphasizes a hands-on approach, requiring candidates to demonstrate their ability to implement DataOps principles in a simulated production environment. This ensures that the holder of the credential is ready to contribute to a technical team from day one.

CDOA – Certified DataOps Architect Certification Tracks & Levels

The certification is divided into three core levels: Foundation, Professional, and Advanced (the Advanced level is also referred to as Master or Master Architect in this guide), allowing for a structured growth path. The Foundation level introduces the core vocabulary and concepts, while the Professional level focuses on implementation and tool integration across various platforms.

The Advanced level is reserved for those who can design multi-cloud data strategies and lead organizational change. These tracks are designed to align with career progression, helping a junior engineer move into a senior or lead role over time. Specialization tracks also allow professionals to focus on areas like data security, governance, or large-scale orchestration depending on their specific career goals.

Complete CDOA – Certified DataOps Architect Certification Table

| Track | Level | Who it’s for | Prerequisites | Skills Covered | Recommended Order |
| --- | --- | --- | --- | --- | --- |
| DataOps Core | Foundation | Aspiring DataOps Engineers | Basic Data Knowledge | DataOps Principles, CI/CD for Data | 1 |
| DataOps Implementation | Professional | Senior Engineers, DevOps | Foundation Cert | Automated Testing, Pipeline Orchestration | 2 |
| DataOps Strategy | Advanced | Architects, Tech Leads | Professional Cert | Data Mesh, Governance, Multi-cloud Design | 3 |
| Data Reliability | Specialist | SREs, Data Reliability Engineers | Professional Cert | Observability, SLOs for Data, Error Budgets | 4 |

Detailed Guide for Each CDOA – Certified DataOps Architect Certification

CDOA – Certified DataOps Architect Foundation

What it is

This level validates a basic understanding of DataOps culture and the primary methodologies used to automate data delivery. It confirms that the individual understands the core differences between traditional data management and modern DataOps.

Who should take it

This is suitable for junior developers, data analysts, and project managers who need to understand the technical lifecycle of data. It serves as an entry point for those pivoting from traditional IT roles.

Skills you’ll gain

- Understanding the DataOps Manifesto and its core principles.

- Identifying bottlenecks in the data development lifecycle.

- Basic knowledge of version control for data schemas.

- Familiarity with continuous integration concepts applied to data pipelines.

Real-world projects you should be able to do

- Documenting a basic automated data workflow for a small team.

- Identifying and reporting on data quality issues using automated scripts.

- Setting up a basic version-controlled repository for SQL scripts.

Preparation plan

- 7–14 days: Focus on vocabulary, the DataOps Manifesto, and core theoretical concepts.

- 30 days: Complete the official training modules and participate in basic lab exercises.

- 60 days: Review case studies of successful DataOps implementations and take practice assessments.

Common mistakes

- Treating DataOps as just another name for DevOps without understanding data-specific challenges.

- Ignoring the cultural aspect and focusing solely on the tools.

- Underestimating the importance of data governance at the foundational level.

Best next certification after this

- Same-track option: CDOA Professional

- Cross-track option: Cloud Practitioner (AWS/Azure/GCP)

- Leadership option: Project Management Professional

CDOA – Certified DataOps Architect Professional

What it is

This certification validates the ability to implement and manage automated data pipelines in a cloud-native environment. It ensures the practitioner can integrate various tools to create a seamless data delivery experience.

Who should take it

This is intended for Senior Data Engineers, DevOps Engineers, and SREs who are responsible for the day-to-day operations of data platforms. It requires a solid technical background.

Skills you’ll gain

- Building and maintaining CI/CD pipelines specifically for data workloads.

- Implementing automated testing for data quality and schema validation.

- Orchestrating complex data workflows using tools like Airflow or Prefect.

- Managing data infrastructure as code (IaC) using Terraform or similar tools.
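
To make the automated testing skill above more concrete, here is a minimal sketch of a schema and type check that could run as a CI step before a batch is loaded. The schema, column names, and the `validate_rows` helper are hypothetical and chosen only for illustration; a real pipeline would more likely rely on a dedicated data-testing framework.

```python
from typing import Any

# Hypothetical expected schema: column name -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def validate_rows(rows: list[dict[str, Any]], schema: dict[str, type]) -> list[str]:
    """Return a list of human-readable problems; an empty list means the batch passed."""
    problems = []
    for i, row in enumerate(rows):
        missing = schema.keys() - row.keys()
        extra = row.keys() - schema.keys()
        if missing:
            problems.append(f"row {i}: missing columns {sorted(missing)}")
        if extra:
            problems.append(f"row {i}: unexpected columns {sorted(extra)}")
        # Check the type of every expected column that is present.
        for column, expected_type in schema.items():
            if column in row and not isinstance(row[column], expected_type):
                problems.append(
                    f"row {i}: {column} should be {expected_type.__name__}, "
                    f"got {type(row[column]).__name__}"
                )
    return problems


if __name__ == "__main__":
    batch = [
        {"order_id": 1, "amount": 9.5, "currency": "EUR"},
        {"order_id": "2", "amount": 4.0},  # wrong type and a missing column
    ]
    for problem in validate_rows(batch, EXPECTED_SCHEMA):
        print(problem)
```

In a CI job, a non-empty result would typically fail the build so that a schema change cannot reach the warehouse unnoticed.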

Real-world projects you should be able to do

- Deploying a fully automated pipeline that moves data from a source to a warehouse with validation checks.

- Implementing an automated rollback mechanism for failed data deployments.

- Setting up comprehensive monitoring and alerting for data pipeline health.

Preparation plan

- 7–14 days: Focus on tool integration and the specifics of data orchestration.

- 30 days: Deep dive into infrastructure as code and automated testing frameworks for data.

- 60 days: Build a complete end-to-end production-grade project and troubleshoot common failure scenarios.

Common mistakes

- Failing to account for data privacy and security during the automation process.

- Creating overly complex pipelines that are difficult for other team members to maintain.

- Neglecting the documentation of the automated processes.

Best next certification after this

- Same-track option: CDOA Master Architect

- Cross-track option: Certified Kubernetes Administrator (CKA)

- Leadership option: Technical Lead / Manager tracks

CDOA – Certified DataOps Architect Master

What it is

This advanced certification validates the expertise required to design large-scale, enterprise-grade data architectures. It focuses on strategy, governance, and the ability to lead a transformational shift in how an organization handles data.

Who should take it

Principal Engineers, Enterprise Architects, and Technical Directors are the primary candidates. It is for those who make high-level decisions regarding technology stacks and organizational structure.

Skills you’ll gain

- Designing Data Mesh and Data Fabric architectures for large organizations.

- Implementing enterprise-wide data governance and compliance automation.

- Optimizing data costs and performance across multi-cloud environments.

- Leading cultural change and establishing DataOps centers of excellence.

Real-world projects you should be able to do

- Designing a multi-region data architecture that complies with global privacy regulations.

- Leading a migration from a monolithic data warehouse to a distributed data mesh.

- Creating a long-term strategic roadmap for an organization’s DataOps maturity.

Preparation plan

- 7–14 days: Review high-level architectural patterns and organizational change management.

- 30 days: Study complex case studies involving global scale and multi-cloud environments.

- 60 days: Draft a comprehensive architectural proposal for a hypothetical enterprise and defend the design choices.

Common mistakes

- Focusing too much on technical details and not enough on business ROI and organizational impact.

- Ignoring the human element of change management in large-scale transformations.

- Failing to plan for long-term scalability and cost management.

Best next certification after this

- Same-track option: Specialized Data Security tracks

- Cross-track option: FinOps Certified Practitioner

- Leadership option: CTO / Chief Data Officer development programs

Choose Your Learning Path

DevOps Path

The DevOps path focuses on integrating data pipelines into existing CI/CD frameworks used for application code. Engineers on this path will learn how to apply the “everything-as-code” philosophy to data schemas, transformations, and migrations. This ensures that application and data updates can happen in lockstep without causing downtime. The goal is to eliminate the friction that usually occurs when a software release requires a manual database change.

DevSecOps Path

This path emphasizes the “Security as Code” aspect within the data lifecycle. It involves automating security checks, PII masking, and encryption within the data pipeline itself. Professionals will learn how to implement automated compliance auditing to ensure that data handling meets regulatory standards like GDPR or HIPAA. This path is essential for organizations operating in highly regulated industries where data leaks carry significant legal and financial risks.
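
As an illustration of PII masking inside a pipeline step, the sketch below pseudonymizes columns flagged as PII and redacts e-mail addresses found in free-text fields. The column list, the salt handling, and the function names are assumptions made for this example. Salted hashing is pseudonymization rather than full anonymization, so whether it is sufficient for a given regulation depends on the context.

```python
import hashlib
import re

# Simple e-mail pattern for illustration; it is not a complete address validator.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical set of columns that a governance policy marks as PII.
PII_COLUMNS = {"email", "phone"}


def pseudonymize(value: str, salt: str) -> str:
    """Replace a value with a salted SHA-256 digest, truncated for readability."""
    return hashlib.sha256((salt + value).encode("utf-8")).hexdigest()[:16]


def mask_record(record: dict[str, str], salt: str) -> dict[str, str]:
    """Return a copy of the record with PII columns hashed and e-mails redacted."""
    masked = {}
    for column, value in record.items():
        if column in PII_COLUMNS:
            masked[column] = pseudonymize(value, salt)
        else:
            # Free-text fields may still contain e-mail addresses; redact them.
            masked[column] = EMAIL_PATTERN.sub("[REDACTED_EMAIL]", value)
    return masked


if __name__ == "__main__":
    record = {
        "email": "a@example.com",
        "phone": "555-0100",
        "note": "contact b@example.org today",
    }
    print(mask_record(record, salt="demo-salt"))
```

Because the digest is deterministic for a given salt, masked values can still be used as join keys downstream, which is one reason this approach is common in analytics pipelines.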

SRE Path

The SRE path focuses on the reliability and observability of data systems. Practitioners learn to define Service Level Objectives (SLOs) for data freshness, quality, and availability. They implement advanced monitoring and self-healing mechanisms to ensure that data platforms remain resilient under heavy load or during infrastructure failures. This path bridges the gap between infrastructure stability and data utility.
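
A data freshness SLO can be sketched in a few lines. The example below assumes a hypothetical setup in which each scheduled check records whether a table was loaded within its allowed age, and then compares the share of passing checks with a target. The function names and thresholds are illustrative only.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_loaded_at: datetime, now: datetime, max_age: timedelta) -> bool:
    """A dataset is fresh if its last successful load is recent enough."""
    return now - last_loaded_at <= max_age


def slo_report(checks: list[bool], target: float) -> dict[str, float]:
    """Summarize freshness checks against an SLO target such as 0.99."""
    total = len(checks)
    good = sum(checks)
    attained = good / total if total else 1.0
    # The error budget is the number of failed checks the target still allows.
    allowed_failures = (1.0 - target) * total
    actual_failures = total - good
    return {
        "attained": attained,
        "error_budget_remaining": allowed_failures - actual_failures,
    }


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    last_load = now - timedelta(minutes=45)
    print(is_fresh(last_load, now, max_age=timedelta(hours=1)))
    print(slo_report([True] * 98 + [False] * 2, target=0.95))
```

A negative `error_budget_remaining` would indicate that the freshness SLO has been breached for the period, which is usually the signal to pause risky changes and prioritize reliability work.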

AIOps Path

AIOps professionals use the DataOps framework to manage the massive datasets required for operational intelligence. This path focuses on the automation of data collection from various infrastructure logs and metrics to feed AI-driven analysis engines. It ensures that the data used for predictive maintenance and automated incident response is accurate, timely, and reliable. This is a critical path for modernizing IT operations in large-scale environments.

MLOps Path

The MLOps path is specifically tailored for the machine learning lifecycle, where data versioning is just as important as model versioning. It involves building pipelines that can handle the unique requirements of feature stores and model training datasets. Professionals learn how to automate the retraining and redeployment of models based on data drift and performance metrics. This ensures that AI models remain accurate as real-world data changes over time.
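
One simple way to picture a drift-based retraining trigger is to compare the mean of a feature in recent data with its mean in the training baseline. The sketch below uses that mean-shift heuristic with a hypothetical threshold. Production systems often use richer statistics, so treat this as an assumption-laden illustration rather than a recommended detector.

```python
from statistics import mean, stdev


def drift_score(baseline: list[float], current: list[float]) -> float:
    """Shift of the current mean from the baseline mean, in baseline standard deviations."""
    spread = stdev(baseline)  # requires at least two baseline values
    if spread == 0:
        return 0.0 if mean(current) == mean(baseline) else float("inf")
    return abs(mean(current) - mean(baseline)) / spread


def should_retrain(baseline: list[float], current: list[float], threshold: float = 2.0) -> bool:
    """Flag retraining when the mean has moved more than `threshold` deviations."""
    return drift_score(baseline, current) > threshold


if __name__ == "__main__":
    training_feature = [10.0, 12.0, 11.0, 9.0, 13.0]
    recent_feature = [20.0, 21.0, 19.0]
    print(should_retrain(training_feature, recent_feature))
```

In a pipeline, a check like this would run on each new batch of feature data, and a positive result would enqueue a retraining job instead of retraining on a fixed schedule.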

DataOps Path

This is the core specialized path focused entirely on the architectural efficiency of data delivery. It covers the end-to-end journey of data from source systems to the final consumer, focusing on reducing cycle time and increasing quality. Professionals on this path become experts in data orchestration, transformation, and distribution. It is the primary path for those who want to lead the data revolution within their organizations.

FinOps Path

The FinOps path focuses on the cloud economics of data management. As data volumes grow, the cost of storage and egress can become a major burden for an organization. This path teaches professionals how to design data architectures that are cost-effective without sacrificing performance. It involves implementing automated cost monitoring and optimizing data lifecycle policies to ensure maximum ROI for every byte stored in the cloud.
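
The effect of a data lifecycle policy on storage cost can be estimated with simple arithmetic. The sketch below compares keeping everything in a hot tier with moving older data to a cold tier. The per-GB prices are hypothetical placeholders, since real prices vary by provider and region, and retrieval and egress fees are ignored.

```python
# Hypothetical prices in USD per GB-month; not taken from any provider's price list.
TIER_PRICE = {"hot": 0.023, "cold": 0.004}


def monthly_cost(gb_by_age_days: dict[int, float], cold_after_days: int) -> float:
    """Estimate monthly storage cost when data older than a cutoff moves to the cold tier."""
    cost = 0.0
    for age_days, gigabytes in gb_by_age_days.items():
        tier = "cold" if age_days >= cold_after_days else "hot"
        cost += gigabytes * TIER_PRICE[tier]
    return cost


if __name__ == "__main__":
    # Keys are the age of each data partition in days, values are its size in GB.
    partitions = {10: 100.0, 100: 1000.0}
    without_policy = monthly_cost(partitions, cold_after_days=10**9)
    with_policy = monthly_cost(partitions, cold_after_days=90)
    print(f"no lifecycle policy: ${without_policy:.2f}")
    print(f"cold after 90 days:  ${with_policy:.2f}")
```

Running the same estimate for several cutoffs gives a quick view of how aggressive a lifecycle policy needs to be before the savings become meaningful.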

Role → Recommended CDOA – Certified DataOps Architect Certifications

| Role | Recommended Certifications |
| --- | --- |
| DevOps Engineer | CDOA Foundation, CDOA Professional |
| SRE | CDOA Professional, Data Reliability Specialist |
| Platform Engineer | CDOA Professional, CDOA Master Architect |
| Cloud Engineer | CDOA Foundation, CDOA Professional |
| Security Engineer | CDOA Foundation, DevSecOps Specialist |
| Data Engineer | CDOA Professional, CDOA Master Architect |
| FinOps Practitioner | CDOA Foundation, FinOps Specialist |
| Engineering Manager | CDOA Foundation, CDOA Master Architect |

Next Certifications to Take After CDOA – Certified DataOps Architect

Same Track Progression

Deep specialization involves moving from the architectural level into niche domains like Data Security or Advanced Data Modeling. After completing the Master Architect level, an individual might pursue research-based credentials or contribute to open-source DataOps tooling. This path ensures the professional remains at the absolute cutting edge of the industry.

Cross-Track Expansion

Broadening your skills often means looking toward Kubernetes for containerized data workloads or deep-diving into a specific cloud provider’s data services. Expanding into FinOps or DevSecOps allows a DataOps Architect to become a multi-faceted leader who understands the financial and security implications of their designs. This makes the professional more versatile and valuable to a broader range of organizations.

Leadership & Management Track

For those moving into management, certifications in technical leadership or enterprise architecture are the logical next steps. This transition involves shifting focus from “how” to build systems to “why” certain systems should be built to meet business objectives. It prepares a professional for roles such as Head of Data Engineering or Chief Technology Officer.

Training & Certification Support Providers for CDOA – Certified DataOps Architect

DevOpsSchool provides a comprehensive ecosystem for learners, offering deep-dive sessions and practical labs that mirror real-world industry challenges. Their approach focuses on creating production-ready engineers who understand the nuances of automation and culture. The school serves as a central hub for various specialized tracks, ensuring that students have access to the latest curriculum and mentor support throughout their learning journey.

Cotocus specializes in hands-on technical training with a strong focus on implementation and tool mastery. They provide a lab-heavy environment where students can experiment with different DataOps tools and configurations without risk to production systems. This provider is ideal for professionals who prefer “learning by doing” and want to gain immediate technical proficiency in the latest cloud-native technologies.

Scmgalaxy is a prominent resource for professionals looking for extensive documentation, community support, and project-based learning. They offer a wealth of information on software configuration management and how it integrates into the broader DataOps landscape. Their training modules are designed to bridge the gap between legacy systems and modern automated environments, making them a preferred choice for mid-career professionals.

BestDevOps focuses on the delivery of high-quality certification prep and career guidance. They provide curated paths that help students navigate the complex world of modern certifications, ensuring they choose the right tracks for their specific career goals. Their programs emphasize the strategic value of DevOps and DataOps, helping candidates articulate their worth to potential employers and stakeholders.

Devsecopsschool.com is the go-to provider for those looking to integrate security into every stage of the development and data lifecycle. Their curriculum is built around the “Security as Code” philosophy, teaching students how to automate compliance and protection. They provide the tools and knowledge necessary to build resilient systems that can withstand modern security threats while maintaining high delivery speeds.

Sreschool.com offers specialized training focused on the principles of site reliability engineering as they apply to complex data systems. Their courses cover observability, incident response, and the management of high-availability architectures. This provider is a good fit for engineers who want their data platforms to be not only automated but also stable and performant under heavy load.

Aiopsschool.com addresses the growing intersection of artificial intelligence and IT operations. They provide training on how to use automated data collection and analysis to drive operational efficiency. Students learn how to build systems that can predict and prevent issues before they impact the business, making this a vital resource for professionals working in large-scale enterprise environments.

Dataopsschool.com is the primary host for the CDOA certification and offers the most direct and specialized curriculum for aspiring DataOps Architects. Their programs are designed by industry veterans who understand the practical challenges of managing data at scale. They provide an end-to-end learning experience that covers everything from foundational theory to advanced enterprise-level architectural strategy.

Finopsschool.com focuses on the critical area of cloud financial management, teaching professionals how to balance technical performance with cost efficiency. Their training is essential for anyone responsible for large-scale data storage and processing budgets. They provide the frameworks necessary to implement transparent cost reporting and optimization strategies that align with business financial goals.

Frequently Asked Questions (General)

- How difficult is it to get certified in DataOps?

The difficulty depends on your prior experience with cloud systems and data engineering. While the foundation level is accessible, professional and master levels require a deep technical understanding of automation and architecture.

- How long does the certification process take?

A motivated learner can complete the foundation level in about 30 days. Higher levels typically require 60 to 90 days of dedicated study and practical hands-on experience to master the complex concepts.

- Are there any specific prerequisites for the foundation level?

There are no formal prerequisites, but having a basic understanding of databases, SQL, and general software development lifecycles will significantly help you progress faster through the initial modules.

- What is the return on investment for this certification?

Professionals often see a significant salary increase and access to more senior roles. For an organization, the ROI comes from faster data delivery, reduced error rates, and more efficient use of cloud resources.

- Is this certification recognized globally?

Yes, the principles taught are universal and based on industry-standard practices. It is highly regarded by major tech firms and enterprises in India, the US, Europe, and other major global markets.

- Can I skip the foundation level and go straight to professional?

It is generally recommended to follow the sequence to ensure a solid grasp of the core philosophy. However, those with extensive documented industry experience may be able to challenge the higher-level exams directly.

- How often do I need to renew my certification?

To ensure you stay current with evolving technology, most certifications in this track require renewal every two to three years. This often involves taking an updated exam or demonstrating ongoing professional development.

- Is the exam theoretical or practical?

The exams are designed to be practical. While there are theoretical components, the focus is on assessing your ability to solve real-world architectural problems and implement automated solutions effectively.

- What tools should I be familiar with?

While the certification is architecturally focused, familiarity with tools like Jenkins, GitLab CI, Airflow, Terraform, and various cloud data warehouses (like Snowflake or BigQuery) is highly beneficial.

- Do I need to be a coder to pursue this?

You should be comfortable with scripting and basic programming. You don’t need to be a software developer, but understanding code is essential for implementing “data-as-code” and automation.

- Does the certification cover multi-cloud strategies?

Yes, the professional and master levels specifically address how to manage data pipelines and architectures across different cloud providers like AWS, Azure, and Google Cloud.

- Are there community groups for certified professionals?

Yes, holding this certification gives you access to an exclusive network of professionals and mentors. This community is a valuable resource for troubleshooting, career advice, and staying updated on industry trends.

FAQs on CDOA – Certified DataOps Architect

- What makes the CDOA different from a standard Data Engineering certificate?

The CDOA focuses on the “Ops” or operational side, emphasizing automation, reliability, and architectural scale rather than just data modeling or querying skills. It is about the pipeline, not just the data.

- Does the CDOA cover data governance?

Yes, governance is a core pillar. The certification teaches how to automate governance tasks like PII masking and access control, ensuring that compliance is part of the automated pipeline.

- How does CDOA help with career transition?

It provides a bridge for DevOps engineers to move into the data space and for Data Engineers to move into high-level architectural and operational leadership roles within their companies.

- Is Kubernetes knowledge required for CDOA?

While not strictly required for the foundation, it is highly recommended for the professional and master levels as many modern DataOps platforms are deployed on containerized infrastructure.

- Does the certification teach specific cloud provider tools?

It focuses on architectural principles that apply to all clouds. However, labs often use popular tools from AWS, Azure, and GCP to demonstrate how these principles are applied in practice.

- Can a manager benefit from the CDOA?

Absolutely. The foundation and master levels are particularly useful for managers to understand how to structure teams, choose technology stacks, and measure the success of data initiatives.

- How does CDOA address data quality?

A major focus of the certification is building automated testing frameworks that check for data quality, schema drift, and integrity at every stage of the delivery pipeline.

- Is there a focus on cost optimization?

Yes, particularly at the master level, where architects are expected to design systems that are both high-performing and cost-efficient in a cloud-billing environment.

Final Thoughts: Is CDOA – Certified DataOps Architect Worth It?

From the perspective of a mentor who has watched the industry evolve through several cycles, the answer is a practical yes. The complexity of data management is not going to decrease, and the organizations that win will be those that can deliver high-quality data at the speed of their business. This certification provides the mental models and technical frameworks needed to be at the center of that success.

It is not a magic bullet that will fix a broken culture overnight, but it gives you the language and the tools to start making real improvements. If you are looking for a way to future-proof your career and move into a strategic technical role, the path of a DataOps Architect is one of the most stable and rewarding choices you can make in the current market. Focus on the principles, master the automation, and the career growth will naturally follow.